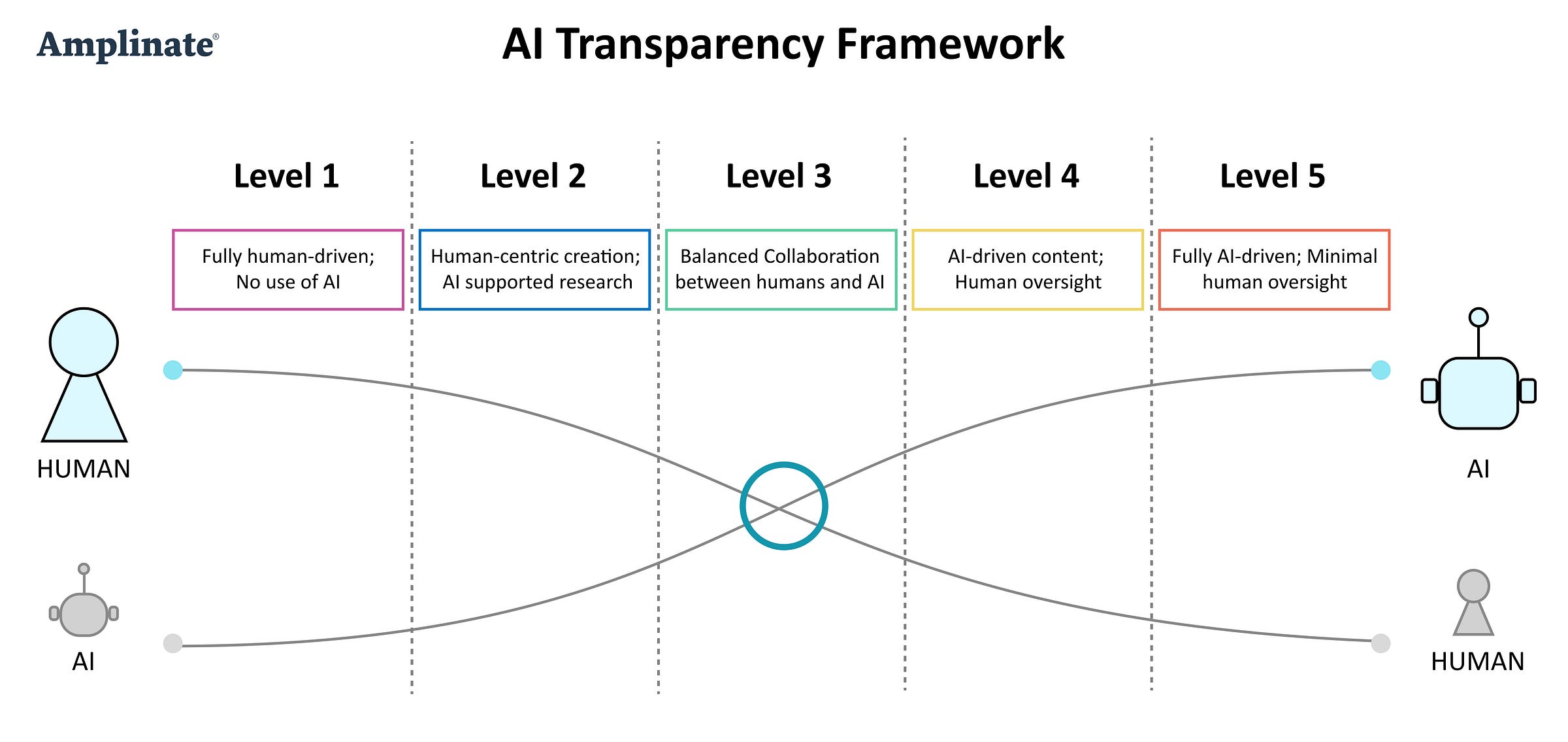

AI Transparency Framework

Deciding when and how to disclose our use of AI-based tools

Are we being honest about our use of AI?

AI is transforming how we create, but are we being transparent about its role? As AI-generated content becomes more sophisticated, we face critical questions:

At what point does AI assistance become dependence?

Should we disclose AI’s involvement in our work—and if so, when and how?

Does failing to disclose AI use erode trust, misrepresent expertise, or even cross ethical lines?

In my latest article, I introduce the AI Transparency Framework—a practical guide for assessing AI’s role in content creation, product development, research, and decision-making. This framework doesn’t judge AI use; instead, it promotes intentionality and openness, helping us define ethical norms before regulation forces our hand.

If you’re using AI (or working in a field where AI is becoming the norm), this is a conversation we all need to have. Read the full article here.

Let’s discuss! I’d love to hear your thoughts and keep this conversation going in this group. 🚀

Great discussion here. I can appreciate the different levels of transparency that can be applied per task or process in end-to-end product lifecycle management.

As an AI product manager, I keep coming back to defining this level of transparency based on the job-to-be-done (strategic epic) and, more specifically, to communicating value across teams based on the user story (epics and tasks).

I’ve experienced some resistance from software engineers in the startup space, who feel this approach could restrict their creativity. What I’ve learned is that if there’s no buy-in to the product vision, or no shared culture of trust and transparency that aligns with the current business model, applying this systematic approach can be challenging, especially if there’s no standard for efficient documentation across product, design, and engineering.